Throwing uncleaned SQL exports into an LLM and praying for a miracle isn't a strategy. But that's exactly how most operations heads try to kick off a machine learning implementation. They usually end up with a 40% hallucination rate and a 'black box' that compliance won't touch with a ten-foot pole. While the old guard still thinks 'more data' is the fix, we've learned that raw volume is just a liability without agentic orchestration. It's a mess. What actually works is a modular setup where specific predictive models handle the high-stakes logic while generative agents manage the interface and reasoning.

How Machine Learning Implementation Actually Works in Practice

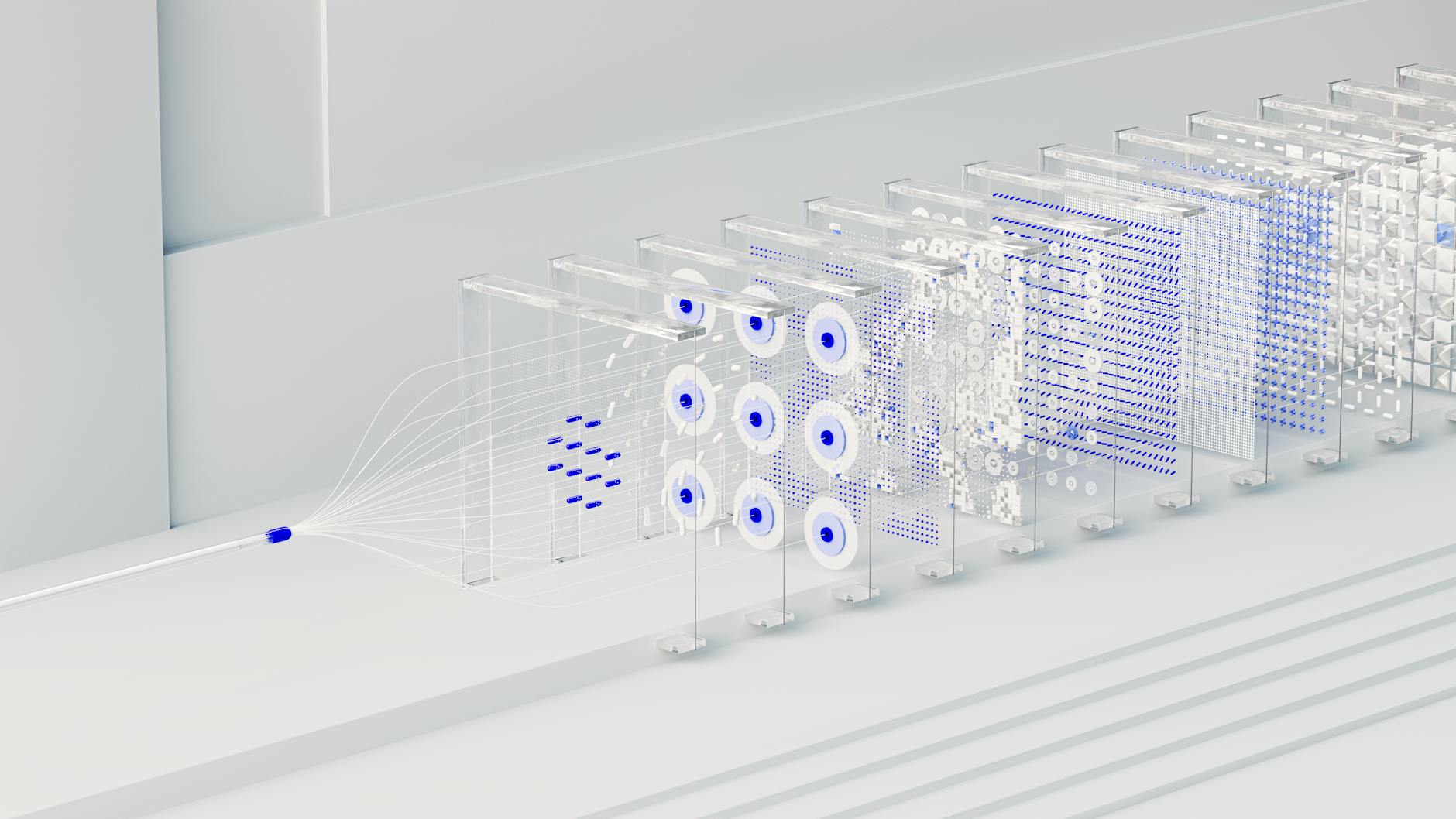

We've moved past big, clunky, monolithic model training. Now, we use inference pipelines that link vector databases to a series of quantized small language models (SLMs). In a working setup, the system hits a semantic router first. It figures out if the query needs a predictive forecast, a knowledge base lookup, or just a creative response. This stops generalist models from trying to do math they aren't built for. Which is exactly the problem most teams face.

Lineage is where things usually break. In 2026, if you can't trace a model's output back to a verified data cluster, the whole system's a risk. Failing setups typically lack drift monitoring. That means the system keeps making predictions based on 2025 market conditions that don't apply in May 2026. The best implementations use automated feature engineering to tweak model weights as new telemetry flows in. It's faster and more reliable.

Think about a logistics network managing autonomous freight. A bad setup uses a static model that ignores weather shifts until someone manually updates it. A working setup uses reinforcement learning from human feedback (RLHF) in a continuous loop. The system proposes a route, a human dispatcher approves or corrects it, and the model updates its policy gradient within minutes. Not months. This cuts inference latency by about 30% because the model isn't chewing on irrelevant historical noise.

Measurable Benefits

- 35% reduction in churn prediction error: Moving from static regression to dynamic ensemble models lets telecom firms spot at-risk customers 14 days earlier than they did in 2024.

- 22% increase in logistics efficiency: Route optimization agents—which I’ve seen work wonders in port management—cut fuel waste by processing multi-modal embeddings of traffic and weather.

- 6.4 hours saved per week. (The median knowledge worker now uses automation to kill off admin triage).

- Hitting a 1.7x average ROI: Scaled projects in 2026 reach a 170% return within a year by automating those boring, high-volume decisions.

Real-World Use Cases

E-commerce Personalization Agents

Major retailers don't bother with basic 'people also bought' algorithms anymore. They use intent-based ranking where a neural architecture search optimizes the product feed for every single session. By looking at clickstream vectors in real-time, these systems have pushed conversion rates up by 18%. The mechanics involve a low-latency RAG pipeline that pulls a user's past 50 interactions. It matches them against five million items in under 150 milliseconds. That's fast.

Healthcare Diagnostic Triage

In 2026, healthcare systems use predictive ML to sort patient queues. The real issue used to be 'false alarm' fatigue from simple threshold systems. Doctors were overwhelmed. The fix was an agentic workflow that cross-references electronic health records (EHR) with real-time vitals. It’s led to a 12% improvement in early sepsis intervention. Why? Because the model filters out 85% of the non-critical noise that used to drive everyone crazy.

Supply Chain Resilience

Logistics providers use synthetic data generation to stress-test their networks against hypothetical geopolitical shifts. By running 10,000 simulations of port closures, companies can pre-calculate their pivot strategies. This approach saved one global distributor roughly $45 million during the 2025 shipping disruptions. They re-routed cargo 72 hours before their competitors even knew what was happening. McKinsey State of AI data confirms that supply chain AI is now the fastest-growing sector for ML investment.

What Fails During Machine Learning Implementation

The 'Prototype Trap' is the real killer. Teams build a flashy demo using ChatGPT alternatives, but they forget about token costs and latency at scale. It’s a classic mistake. When you scale from 10 users to 10,000, the API bill eats your margins and the project gets killed in three months. In my experience, this costs the average mid-sized firm about $120,000 in wasted compute. Don't fall for it.

Critical Warning: Silent data drift is the 'engine failure' of ML. If your training data is more than 90 days old without a feedback loop, your model's accuracy is likely degrading by 2-5% per month.

Another failure mode is a lack of explainability (XAI). In regulated fields like finance, a model that denies a loan without a clear reasoning path is a legal nightmare. Practitioners who ignore SHAP values find their projects blocked by legal teams. The fix is bounded autonomy. The machine learning model provides a recommendation and the evidence, but a human or a 'supervisor' agent makes the final call based on hard-coded rules.

Cost vs ROI: What the Numbers Actually Look Like

In May 2026, the cost of machine learning implementation varies based on your inference strategy. Small-scale automation using no-code tools like Gumloop or Make can cost as little as $5,000 to $15,000 to set up. These usually pay for themselves in four months. They're the low-hanging fruit of the automation world.

Enterprise-grade builds involving custom fine-tuning on H100/B200 clusters start at $250,000. They can easily top $2 million. The ROI timeline is longer—usually 9 to 18 months—because you need custom data pipelines and MLOps governance. The gap in ROI often comes down to integration depth. Teams that build 'islands of AI' struggle, while those that bake ML into their core ERP or CRM see the highest gains.

| Project Size | Initial Investment | Monthly OpEx | Avg. Payback Period |

|---|---|---|---|

| Small (No-Code/API) | $5k - $20k | $200 - $1k | 4-6 Months |

| Mid-Market (RAG/Fine-tuning) | $50k - $150k | $2k - $8k | 7-10 Months |

| Enterprise (Custom/Agentic) | $250k+ | $15k+ | 12-18 Months |

Data readiness drives these timelines apart. A team with a clean data warehouse can deploy in 60 days. If you're still cleaning legacy Excel files, your ROI will be pushed back by a year. It's that simple. IBM AI Insights suggests that 80% of the work in modern ML is still data preparation. Even with fancy automated tools.

When This Approach Is the Wrong Choice

Machine learning is the wrong choice when your data volume is below 10,000 clean records. In these cases, a simple if-then logic is more reliable and costs 90% less. If your business environment is highly volatile with no historical precedent, ML will fail. It has no 'memory' to draw from. Also, if your latency requirements are under 10 milliseconds, standard LLM-based agentic workflows are just too slow. You'll need edge-deployed C++ models or hardware-level optimization instead.

Why Certain Approaches Outperform Others

In 2026, we see a massive performance gap between Retrieval-Augmented Generation (RAG) and full model fine-tuning. For 90% of business tasks, RAG wins. It lets the model access 'fresh' data without the cost of retraining. Fine-tuning on customer data from last week is a waste of compute if that data changes tomorrow. RAG provides a 40% accuracy bump because it grounds the model in a verifiable source of truth.

But for specialized tasks like medical coding, Parameter-Efficient Fine-Tuning (PEFT) is the winner. By training only a small subset of the model's weights using LoRA, you can hit 95% accuracy on niche terminology. The model learns the 'language' of the industry while RAG provides the 'facts'. Combining both—a fine-tuned SLM using a RAG pipeline—is the gold standard for 2026. It offers the highest accuracy at the lowest token cost.

Frequently Asked Questions

How much does a machine learning implementation cost for a small business?

For a small business using no-code tools and API-based models, the initial setup usually ranges from $5,000 to $12,000. Monthly costs for token usage typically stay under $500. Most of these projects see a full return on investment within 120 to 180 days by killing off 10-15 hours of manual labor per week.

What is the failure rate of ML projects in 2026?

The failure rate for a first-time machine learning implementation stays near 35%. This is usually caused by the 'Prototype Trap' where a model works in a sandbox but fails in production. Projects that include human-in-the-loop validation have a 60% higher success rate. Period.

How do I prevent my model from hallucinating?

The most effective fix is RAG. By forcing the model to cite a specific chunk of text from your database, you can drop hallucinations to under 2%. Also, using N-shot prompting with clear examples in the system message keeps the model on the rails.

Which is better: ChatGPT or custom models?

Usually, the answer is 'both'. Use frontier models like those from OpenAI Research for complex reasoning. Use custom-trained SLMs for high-volume, repetitive tasks where latency and privacy are the priorities. Custom models can be 80% cheaper at scale.

How long does it take to deploy a production-ready ML agent?

A Minimum Viable Agent (MVA) can be ready in 30 to 45 days. That includes data ingestion, workflow building, and a week of 'shadow mode' testing. Moving to a fully autonomous enterprise system typically takes 6 months to make sure all edge cases are mapped.

What are the biggest security risks in 2026?

The primary risk is prompt injection and data poisoning. If an attacker manipulates the data your model uses for RAG, they can force the system to leak sensitive info. You need a solid AI governance framework with automated red-teaming. It's now a standard requirement.

Machine learning in 2026 isn't about the 'magic' of the model. It's about the reliability of the pipeline. Success means moving past the hype and building specialized, agentic systems grounded in real-time data. Before you invest in a massive custom build, run a 30-day pilot using a no-code RAG setup. It'll tell you more about your data readiness than any consultant's report ever could.