Too many teams start by chasing a complex model architecture. They expect high-precision results to just turn into business value. It doesn't work that way. What they get instead is a 'black box' that breaks the moment real-world data shifts by 5%, costing the organization upwards of $200,000 in wasted compute and engineering hours. This failure occurs because they skip the semantic data alignment phase that determines 80% of the outcome. This machine learning implementation guide focuses on the transition from experimental scripts to durable, production-ready automation that survives the volatility of 2026 markets.

How Predictive Modeling Actually Works in Practice

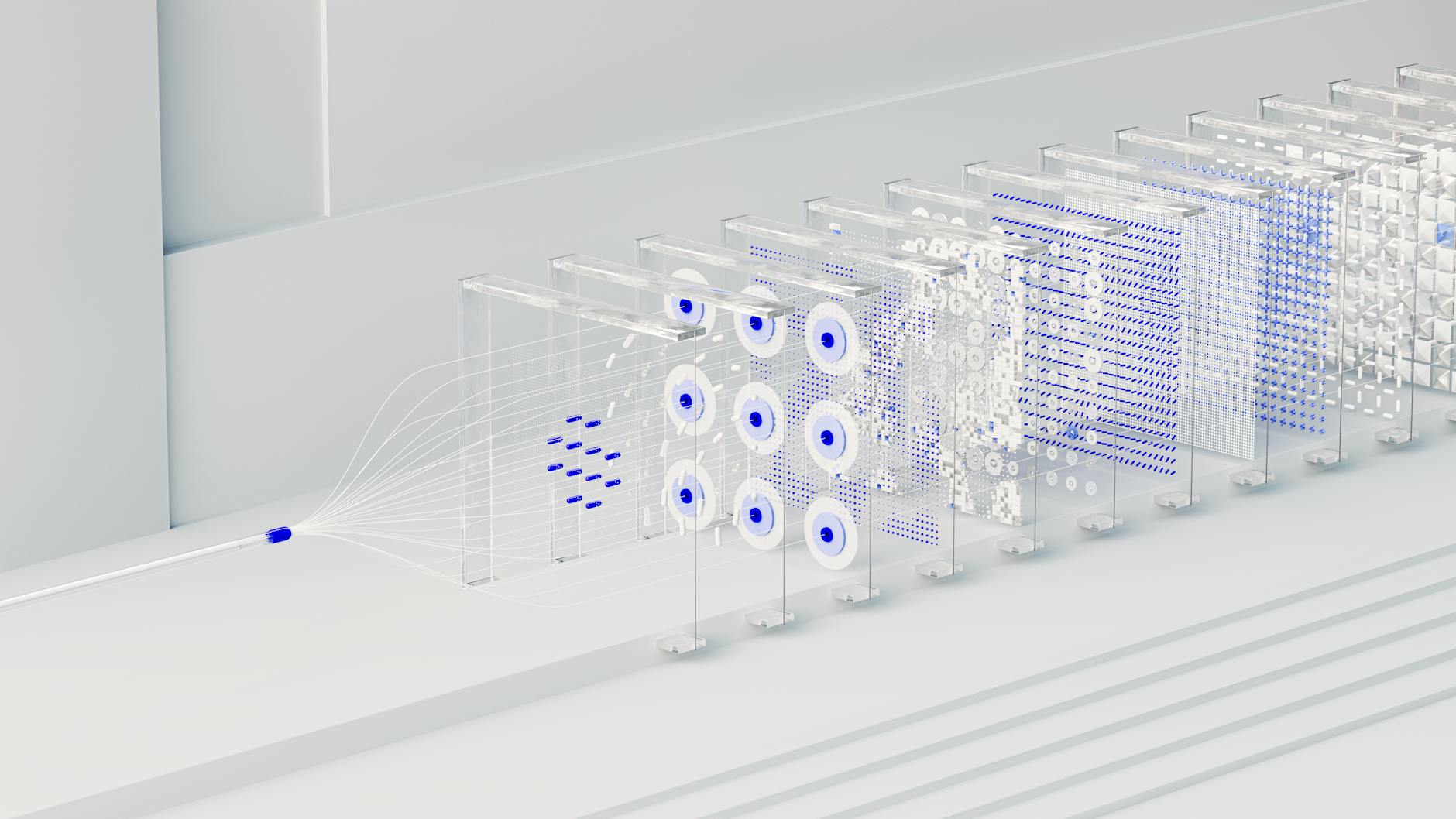

In a real-world 2026 setup, the model itself is usually the least expensive component. The heavy lifting happens in the feature store and the orchestration layer. A working setup treats data as a continuous stream rather than a static snapshot. When a logistics network uses predictive routing, the system doesn't just look at historical traffic; it pulls real-time telemetry from edge devices and cross-references it with weather patterns via vector embeddings.

Implementation breaks when teams don't account for inference latency. If your model takes 3 seconds to predict a customer's next click, the user's already left the page. Honestly, most setups fail here. A failing setup usually involves a monolithic architecture where every request triggers a full model wake-up. A successful one uses model quantization and asynchronous processing to keep response times under 100ms.
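One way to picture the asynchronous side of this is a latency budget around the model call. The sketch below is a minimal illustration, not a production pattern: `predict_next_click`, the `simulated_delay_s` field, and the fallback value are hypothetical stand-ins, and the 100 ms budget is simply the target quoted above.

```python
import asyncio

LATENCY_BUDGET_S = 0.100  # the 100 ms response-time target from the text


async def predict_next_click(features: dict) -> str:
    """Hypothetical stand-in for a call to a warm, quantized model."""
    await asyncio.sleep(features.get("simulated_delay_s", 0.001))
    return "product_page"


async def predict_with_budget(features: dict, fallback: str = "home_page") -> str:
    """Return the model's answer if it arrives in time, else a cheap fallback."""
    try:
        return await asyncio.wait_for(
            predict_next_click(features), timeout=LATENCY_BUDGET_S
        )
    except asyncio.TimeoutError:
        # The slow call is cancelled; the user still gets a response.
        return fallback


fast = asyncio.run(predict_with_budget({"simulated_delay_s": 0.001}))
slow = asyncio.run(predict_with_budget({"simulated_delay_s": 3.0}))
print(fast, slow)
```

The design choice here is that a late prediction is treated as worthless: the request falls back to a default instead of making the user wait the full 3 seconds.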

The global machine learning market has reached $127.94 billion in 2026, yet 85% of projects still fail to move beyond the pilot stage due to poor data quality and lack of operational readiness.

Measurable Benefits: Quantifiable Performance Deltas

- 40% reduction in operational overhead for financial reconciliation tasks by replacing manual audits with autonomous agents.

- 22% increase in customer retention rates through predictive churn modeling.

- 15% decrease in energy consumption for large-scale data centers (depending on the cooling setup) by using neural network optimization to manage loads in real time.

- 60% faster time to market for localized content when fine-tuned LLMs handle regional sentiment analysis and translation.

Real-World Use Cases for AI Automation

E-commerce: Dynamic Margin Optimization

Retailers have ditched simple rule-based pricing. By deploying gradient-boosted decision trees, platforms analyze competitor pricing, local inventory levels, and individual user price sensitivity in real-time. This results in a 12% lift in gross margin. It works by capturing 'willingness to pay' during peak demand periods without triggering mass cart abandonment.

Healthcare: Diagnostic Imaging Triage

In modern healthcare systems, machine learning acts as a filter for radiologists. Models trained on synthetic data identify anomalies in MRI scans with 94% accuracy, flagging urgent cases for immediate human review. This triage system reduces the average wait time for critical diagnoses from 14 hours to 45 minutes. That's a life-saving difference.

Logistics: Predictive Maintenance for Last-Mile Delivery

Logistics networks use recurrent neural networks (RNNs) to monitor vehicle health. By analyzing vibration data and fuel consumption patterns, the system predicts engine failures 500 miles before they occur. This saves an average of $4,200 per vehicle annually in avoided emergency repairs and towing costs.

Critical Failure Modes: Why 85% of Projects Stall

Data drift kills projects. This happens when the statistical properties of the input data change, making the model's training obsolete. For example, a fraud detection model trained on 2024 spending patterns will fail in 2026 as consumer behavior moves toward biometric-first payments. The fix is a solid MLOps pipeline that triggers automated retraining when performance degrades by more than a pre-defined threshold (usually 2-3%).
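A retraining trigger of this kind can be sketched as a rolling accuracy check. The class below is an illustration under stated assumptions: `RetrainTrigger` and its parameters are hypothetical names, and the threshold is interpreted as a drop of 2 percentage points of accuracy relative to the accuracy measured at deployment.

```python
from collections import deque


class RetrainTrigger:
    """Tracks rolling accuracy over the most recent labeled predictions."""

    def __init__(self, baseline_accuracy: float, max_drop: float = 0.02,
                 window: int = 500) -> None:
        self.baseline_accuracy = baseline_accuracy
        self.max_drop = max_drop  # assumed: absolute drop in accuracy
        self.outcomes = deque(maxlen=window)

    def record(self, was_correct: bool) -> None:
        """Store whether one production prediction turned out to be right."""
        self.outcomes.append(1 if was_correct else 0)

    def should_retrain(self) -> bool:
        """True once a full window shows accuracy below baseline - max_drop."""
        if len(self.outcomes) < self.outcomes.maxlen:
            return False  # not enough evidence yet
        rolling = sum(self.outcomes) / len(self.outcomes)
        return (self.baseline_accuracy - rolling) > self.max_drop


trigger = RetrainTrigger(baseline_accuracy=0.95, max_drop=0.02, window=100)
for i in range(100):
    trigger.record(i >= 5)       # 95 correct out of 100
healthy = trigger.should_retrain()
for i in range(100):
    trigger.record(i >= 10)      # 90 correct out of 100
drifted = trigger.should_retrain()
print(healthy, drifted)
```

In a real pipeline the `should_retrain()` result would feed whatever scheduler launches the retraining job; that wiring is left out here.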

Critical Warning: Ignoring algorithmic transparency can lead to massive regulatory fines. In 2026, systems must be able to provide a 'reason code' for every automated decision that affects a consumer's financial or health status.

Another common failure is the 'Cold Start' problem. Teams try to build custom models without enough labeled data. This results in a model that hallucinates or produces biased outputs. The cost of manual labeling has risen to $1.50 per record for specialized domains, which is why transfer learning from foundation models is a more viable path for 70% of businesses.

Cost vs ROI: The 2026 Budgetary Reality

Don't just budget for the build. A machine learning implementation requires looking past the initial launch. Timelines vary, but they're mostly driven by data maturity. A team with a clean data warehouse can hit ROI in 6 months, while a team struggling with legacy silos may take 2 years to break even.

| Project Scale | Initial Build Cost | Monthly Maintenance | Typical Payback Period |
|---|---|---|---|
| Small (Niche Automation) | $50,000 - $120,000 | $2,500 - $5,000 | 4 - 8 Months |
| Medium (Departmental AI) | $250,000 - $600,000 | $15,000 - $30,000 | 10 - 14 Months |
| Enterprise (Core Operations) | $1.5M - $4M+ | $80,000 - $150,000 | 18 - 24 Months |
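The payback figures in the table can be sanity-checked with simple arithmetic: build cost divided by the net monthly gain. The sketch below assumes a hypothetical `monthly_benefit` input (the table does not state one), so the numbers in the example are illustrative only.

```python
from typing import Optional


def payback_months(build_cost: float, monthly_maintenance: float,
                   monthly_benefit: float) -> Optional[float]:
    """Months needed to recover the build cost, or None if it never pays back."""
    net_monthly_gain = monthly_benefit - monthly_maintenance
    if net_monthly_gain <= 0:
        return None  # maintenance eats the whole benefit
    return build_cost / net_monthly_gain


# Hypothetical small project: $100,000 build, $4,000/month maintenance,
# and an assumed $20,000/month of savings or new revenue.
small_project = payback_months(100_000, 4_000, 20_000)
print(small_project)  # 6.25
```

A project whose monthly benefit does not exceed its maintenance cost returns `None`, which is the budget-level version of never breaking even.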

High-growth organizations are currently allocating 15-20% of their total IT budget to AI-driven initiatives, according to the McKinsey State of AI report. The primary cost drivers are GPU compute credits and the high salaries of machine learning engineers. In major tech hubs, those salaries now average $280,000 per year.

When Machine Learning Implementation Is the Wrong Choice

Don't use machine learning if your data volume is below 10,000 records for a supervised learning task. At this scale, a well-crafted heuristic or rule-based system will outperform a neural network nine times out of ten. Plus, if your problem requires 100% explainability (like specific legal compliance workflows), the probabilistic nature of ML is a liability. If your inference cost exceeds the marginal value of the decision, stick to traditional automation tools like low-code platforms.
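These three checks are easy to turn into a pre-project gate. The function below is a minimal sketch; its name, parameters, and messages are hypothetical, and the 10,000-record floor is the rule of thumb from this section, not a hard limit.

```python
from typing import List


def reasons_to_skip_ml(labeled_records: int, needs_full_explainability: bool,
                       inference_cost: float, decision_value: float,
                       min_records: int = 10_000) -> List[str]:
    """Return the reasons (if any) that argue against an ML approach."""
    reasons = []
    if labeled_records < min_records:
        reasons.append("too few labeled records; try rules or heuristics")
    if needs_full_explainability:
        reasons.append("workflow requires fully explainable decisions")
    if inference_cost > decision_value:
        reasons.append("each prediction costs more than the decision is worth")
    return reasons


small_dataset = reasons_to_skip_ml(2_500, False, 0.002, 0.05)
good_fit = reasons_to_skip_ml(50_000, False, 0.002, 0.05)
print(small_dataset, good_fit)
```

An empty list means none of the three objections apply; it does not by itself prove that ML is the right choice.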

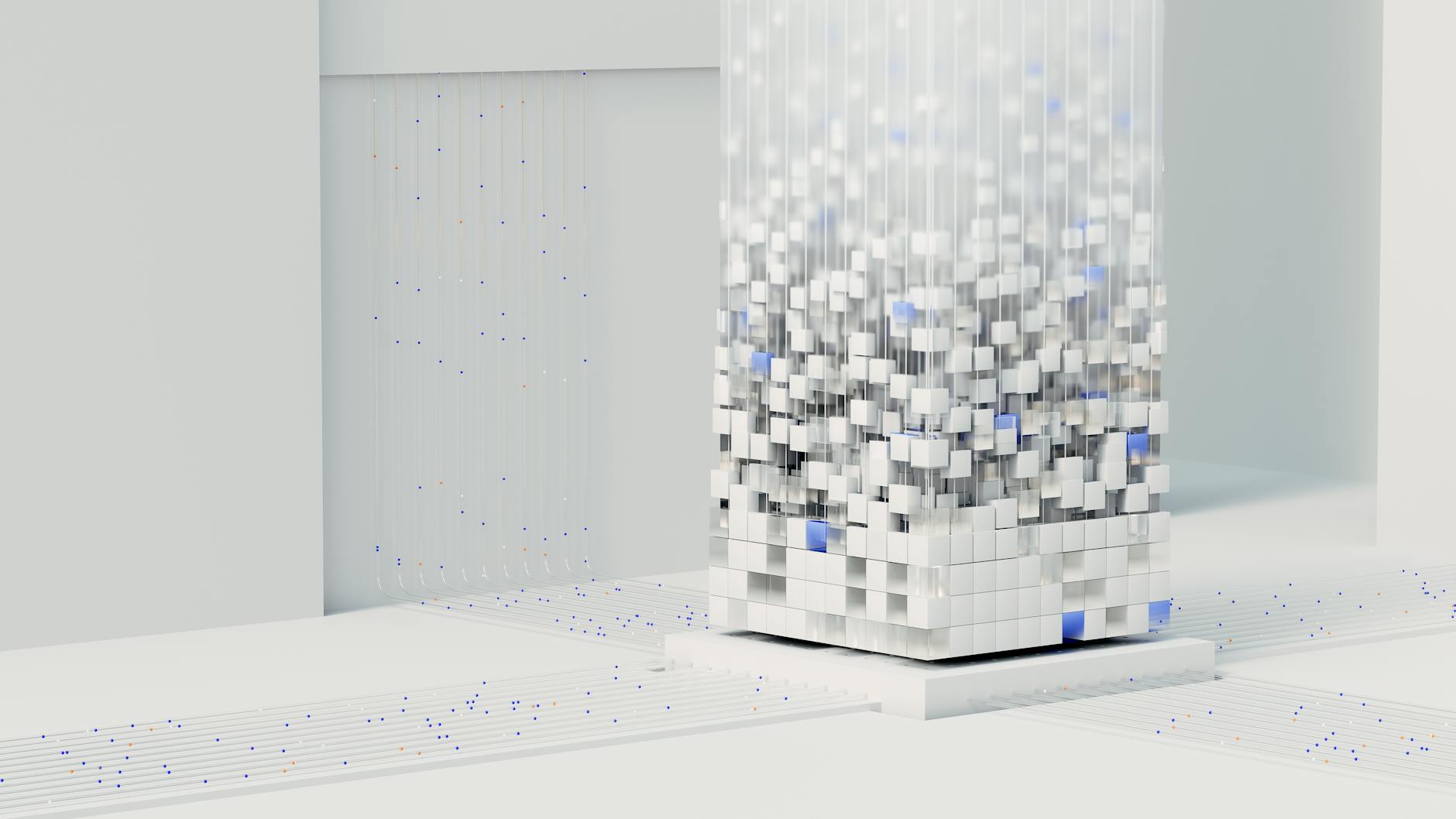

Why Agentic Architectures Outperform Standard RAG

The industry has moved on. In 2026, we've shifted from simple Retrieval-Augmented Generation (RAG) to Agentic AI. While standard RAG is excellent for basic Q&A, it can't execute multi-step tasks. Agentic systems use recursive feedback loops to verify their own outputs before showing them to a user. This approach cuts hallucination rates from 12% in standard setups down to less than 1.5% in agentic workflows.
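The verify-before-showing loop can be sketched in a few lines. This is a simplified illustration, assuming two hypothetical callables: `generate` (produces a draft, optionally using feedback) and `verify` (returns a pass flag plus feedback). In a real system both would wrap model or tool calls; the toy versions here only demonstrate the control flow.

```python
from typing import Callable, Optional, Tuple


def answer_with_verification(
    question: str,
    generate: Callable[[str, str], str],
    verify: Callable[[str, str], Tuple[bool, str]],
    max_attempts: int = 3,
) -> Optional[str]:
    """Draft, check, and retry with feedback; return None if no draft passes."""
    feedback = ""
    for _ in range(max_attempts):
        draft = generate(question, feedback)
        passed, feedback = verify(question, draft)
        if passed:
            return draft
    return None  # escalate to a human instead of showing an unverified answer


def toy_generate(question: str, feedback: str) -> str:
    # Hypothetical generator: improves its draft once it receives feedback.
    return "Shipment rerouted via Rotterdam" if feedback else "Shipment rerouted"


def toy_verify(question: str, draft: str) -> Tuple[bool, str]:
    # Hypothetical verifier: requires the draft to name the new route.
    if "via" in draft:
        return True, ""
    return False, "Name the new route."


result = answer_with_verification("Where is order 1182?", toy_generate, toy_verify)
print(result)
```

The bounded `max_attempts` matters: every extra verification pass adds latency and cost, so the loop gives up rather than retrying indefinitely.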

The performance differences are stark. An agentic workflow can manage complex supply chain disruptions by autonomously contacting vendors and re-routing shipments. A RAG system can only tell you that a problem happened. This capability is powered by compute orchestration that scales GPU resources based on task complexity, a mechanism detailed in recent OpenAI Research papers.

Frequently Asked Questions

How much does it cost to train a custom model in 2026?

For a mid-sized enterprise model using H100-equivalent GPUs, you'll likely spend between $15,000 and $45,000 on raw compute for the initial training. That doesn't include data prep, which often costs even more.

What is the minimum data requirement for predictive modeling?

It varies, but 10,000 high-quality labeled rows is a good baseline for classification. If you're doing generative tasks, you're better off fine-tuning a foundation model with 500-1,000 perfect examples.

How do you prevent model drift in production?

Set up an automated monitoring system to track the Kullback-Leibler (KL) divergence. If the divergence crosses a 0.1 threshold, the system needs to alert you for a manual review or kick off automated retraining.
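As a rough illustration of that check, the sketch below computes KL divergence between a training-time and a live distribution of a categorical feature. The payment-method counts are made up, the direction KL(live || training) and the use of natural logarithms are assumptions, and the small `eps` smoothing is there only to avoid division by zero for unseen categories.

```python
import math
from typing import Dict, List, Sequence

DRIFT_THRESHOLD = 0.1  # the alert threshold quoted in the text


def to_distribution(counts: Dict[str, int], categories: Sequence[str],
                    eps: float = 1e-6) -> List[float]:
    """Turn raw counts into smoothed probabilities over a fixed category list."""
    smoothed = [counts.get(c, 0) + eps for c in categories]
    total = sum(smoothed)
    return [s / total for s in smoothed]


def kl_divergence(p: Sequence[float], q: Sequence[float]) -> float:
    """KL(p || q) in nats for two discrete distributions on the same support."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


categories = ["card", "wallet", "biometric"]
training_counts = {"card": 700, "wallet": 250, "biometric": 50}   # made-up data
live_counts = {"card": 400, "wallet": 250, "biometric": 350}      # made-up data

training = to_distribution(training_counts, categories)
live = to_distribution(live_counts, categories)

drift_score = kl_divergence(live, training)
needs_review = drift_score > DRIFT_THRESHOLD
print(round(drift_score, 2), needs_review)  # roughly 0.46, True
```

For continuous features the same idea applies after binning values into a shared set of histogram buckets; the bucket choice affects the score, so the 0.1 threshold should be calibrated per feature.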

Can no-code AI tools handle enterprise-level implementation?

They can. Platforms like n8n and Make now have native links for vector databases and model endpoints. They'll handle up to 50,000 task executions per month before you'll need to move to a custom Python setup.

What is the average latency for an agentic AI workflow?

Most complex workflows in 2026 take 2 to 5 seconds. This is because they involve multiple 'thought' steps and API calls. To keep users happy, practitioners use streaming responses to keep perceived latency under 200ms.
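The streaming idea can be shown with a plain generator: the first chunk goes out before any slow step has run, so the user sees activity immediately even though the whole workflow still takes seconds. The step functions and the acknowledgement text below are hypothetical placeholders for real tool and model calls.

```python
from typing import Callable, Iterable, Iterator, List

calls: List[str] = []  # records which steps have actually executed


def make_step(name: str) -> Callable[[], str]:
    """Build a hypothetical workflow step that logs when it runs."""
    def run() -> str:
        calls.append(name)
        return f"{name}: done"
    return run


def stream_workflow(steps: Iterable[Callable[[], str]]) -> Iterator[str]:
    """Yield an immediate acknowledgement, then each step's result as it finishes."""
    yield "Working on it..."
    for step in steps:
        yield step()


stream = stream_workflow([make_step("lookup"), make_step("draft")])
first_chunk = next(stream)
steps_run_before_first_chunk = list(calls)  # still empty at this point
remaining_chunks = list(stream)
print(first_chunk, remaining_chunks)
```

Perceived latency is the time to `first_chunk`, not the time to the last one, which is why streaming helps even when total work is unchanged.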

Is 85% project failure still the industry standard?

Unfortunately, it is. While the IBM AI Insights report shows we have better tools, the failure rate is still high because organizations ignore the 'plumbing', such as data governance and MLOps.

Conclusion

Successful machine learning implementation requires a shift in mindset. Stop viewing AI as a product; it's a core capability. The winners in 2026 aren't the ones with the biggest models. They're the ones with the most resilient data pipelines and the fastest feedback loops. Before you invest in a full-scale build, run a two-week feasibility study on a subset of your data. If your model can't beat a simple baseline by 15%, the full build isn't worth the investment yet.
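The feasibility gate can be written down as a one-line check. The sketch below assumes the 15% figure means relative improvement on whatever metric the study uses (higher is better); the function name and the example scores are hypothetical.

```python
def beats_baseline(model_score: float, baseline_score: float,
                   min_relative_lift: float = 0.15) -> bool:
    """True if the model improves on the baseline by at least the required lift."""
    if baseline_score <= 0:
        raise ValueError("baseline_score must be positive")
    relative_lift = (model_score - baseline_score) / baseline_score
    return relative_lift >= min_relative_lift


# Hypothetical feasibility-study results on a held-out subset.
worth_building = beats_baseline(model_score=0.72, baseline_score=0.60)  # 20% lift
not_yet = beats_baseline(model_score=0.66, baseline_score=0.60)         # 10% lift
print(worth_building, not_yet)
```

A sensible baseline for classification is predicting the majority class or applying an existing business rule; the check is only as meaningful as the baseline it is compared against.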