Most operations leaders sink six figures into custom business applications of machine learning expecting 20% efficiency gains overnight. Usually, they're disappointed. Their models spit out "hallucinated" nonsense or get stuck in a permanent testing loop. This happens because they focus on the shiny algorithm instead of the messy data pipeline; specifically, they skip the feature engineering needed to make the math work for their industry. By May 2026, the gap between winners and losers isn't about who has the smartest model. It's about who built an MLOps framework solid enough to handle real-world data swings.

How Business Applications of Machine Learning Actually Work in 2026

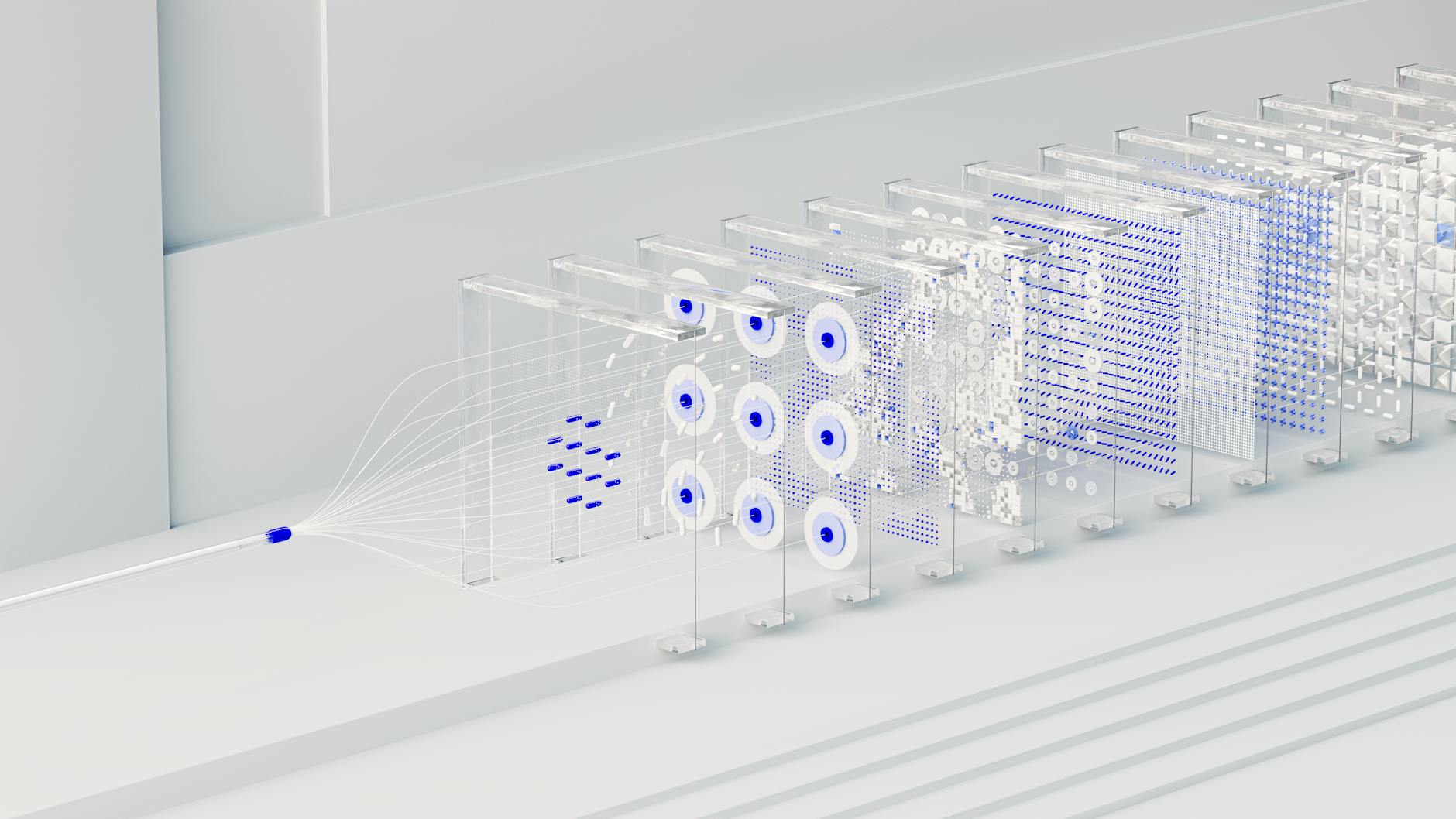

Forget the idea of a single, giant neural network doing everything. Today, high-performing teams use a composable AI architecture. Think of it as a team of experts rather than one genius: you orchestrate multiple specialized Large Language Models (LLMs) and traditional regression models through a central API layer, and each model handles only the tasks it does best. The whole system relies on Retrieval-Augmented Generation (RAG) to ground outputs in your own company data, which keeps the logic tethered to reality rather than to random training data from the internet.
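A minimal sketch of the central routing layer, assuming each specialist sits behind a plain Python function. The handler names and the `ROUTES` registry are hypothetical stand-ins; in production each handler would call a separate model endpoint (an LLM, a regression model, a classifier) instead of returning a string.

```python
from typing import Callable, Dict


# Hypothetical specialists. Each stub stands in for a call to a separate
# model endpoint (an LLM, a regression model, a small classifier, ...).
def summarize_document(payload: str) -> str:
    return f"[summary model] {payload[:40]}"


def forecast_demand(payload: str) -> str:
    return f"[regression model] forecast for {payload}"


def classify_ticket(payload: str) -> str:
    # Toy keyword rule in place of a trained classifier.
    return "[classifier] billing" if "invoice" in payload.lower() else "[classifier] general"


# The central layer: one registry mapping task types to specialists.
ROUTES: Dict[str, Callable[[str], str]] = {
    "summarize": summarize_document,
    "forecast": forecast_demand,
    "classify": classify_ticket,
}


def route(task_type: str, payload: str) -> str:
    """Dispatch a request to the specialist registered for its task type."""
    handler = ROUTES.get(task_type)
    if handler is None:
        raise ValueError(f"no specialist registered for task type {task_type!r}")
    return handler(payload)


print(route("classify", "Question about my invoice"))  # [classifier] billing
```

Adding or swapping a specialist means editing one registry entry, which is what makes the architecture composable.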

A production-ready workflow usually kicks off with data ingestion from fragmented sources like ERP systems, CRM logs, and IoT sensors. This data is cleaned and vectorized in real time, so vector databases can supply context to the machine learning models in milliseconds. This is also where most projects break. If you force the system to process too much raw data without pre-filtering, inference latency spikes, your costs go up, and your users get frustrated. A smart setup uses edge computing for the initial heavy lifting and sends only the essential data to the cloud, which is far more efficient.
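As a sketch of edge pre-filtering, assume a device that sees a stream of temperature readings and should forward only anomalies. The `edge_filter` function, the 5-reading window, and the 2.0-degree tolerance are illustrative assumptions, not a standard; real deployments tune these per sensor.

```python
from collections import deque
from typing import Deque, Iterable, List, Tuple


def edge_filter(readings: Iterable[float], window: int = 5,
                tolerance: float = 2.0) -> List[Tuple[int, float]]:
    """Return only the (index, value) pairs worth sending to the cloud.

    A reading is forwarded when it differs from the rolling mean of the
    previous `window` readings by more than `tolerance` (in sensor units).
    Routine readings stay on the device.
    """
    recent: Deque[float] = deque(maxlen=window)
    forwarded: List[Tuple[int, float]] = []
    for i, value in enumerate(readings):
        # Only judge a reading once a full baseline window exists.
        if len(recent) == window:
            baseline = sum(recent) / window
            if abs(value - baseline) > tolerance:
                forwarded.append((i, value))
        recent.append(value)
    return forwarded


temps = [70.0, 70.2, 69.9, 70.1, 70.0, 70.1, 78.5, 70.0, 70.2]
print(edge_filter(temps))  # [(6, 78.5)]
```

Only one of nine readings leaves the device here. The spike stays in the rolling window afterwards and nudges the baseline up for a few readings; a median baseline would be more robust to that.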

In my experience, the difference between a win and a total flop is the "Shadow Mode" phase. This is where your new model runs quietly alongside your current process for 30 days. You need to see how it stacks up against your people before you give it the keys.
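One way to score a shadow-mode period, sketched under assumptions: each case logs what your team did, what the model would have done (never acted on), and the decision that turned out to be right. The `ShadowRecord` fields, the decision labels, and the go-live rule are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class ShadowRecord:
    human_decision: str   # what your team actually did
    model_decision: str   # what the model would have done (logged, not acted on)
    actual_outcome: str   # the decision that turned out to be right


def shadow_report(records: List[ShadowRecord]) -> Dict[str, float]:
    """Summarize a shadow period: who was right more often, and how often they agreed."""
    n = len(records)
    if n == 0:
        raise ValueError("no shadow-mode records to evaluate")
    return {
        "human_accuracy": sum(r.human_decision == r.actual_outcome for r in records) / n,
        "model_accuracy": sum(r.model_decision == r.actual_outcome for r in records) / n,
        "agreement_rate": sum(r.human_decision == r.model_decision for r in records) / n,
    }


log = [
    ShadowRecord("approve", "approve", "approve"),
    ShadowRecord("reject", "approve", "approve"),
    ShadowRecord("approve", "approve", "approve"),
    ShadowRecord("reject", "reject", "reject"),
]
report = shadow_report(log)
print(report)  # {'human_accuracy': 0.75, 'model_accuracy': 1.0, 'agreement_rate': 0.75}

# Hypothetical go-live rule: the model must at least match the humans.
ready_to_go_live = report["model_accuracy"] >= report["human_accuracy"]
```

The disagreement cases (here, one in four) are the ones worth reviewing by hand before handing over the keys.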

Take a mid-sized logistics network moving 5,000 shipments a day. A weak setup tries to predict delays from historical averages alone, and it doesn't work. A solid 2026 setup pulls in weather feeds, port congestion data, and computer vision from warehouse cameras, which lets you adjust routes on the fly. With these multimodal inputs, you can cut idle time by 18%. The old way misses events like local strikes or equipment failures every single time.

Quantifying the Impact: Measurable Benefits of Business Applications of Machine Learning

- 22% reduction in Customer Acquisition Cost (CAC): Stop guessing. By using predictive analytics to find high-intent leads, your marketing team stops burning cash on people who won't buy.

- 15% revenue uplift via dynamic pricing: Retail platforms use reinforcement learning to adjust prices based on competitor pricing and local demand spikes. It's an instant margin boost.

- 40% decrease in manual data entry: This is a big deal. Using natural language processing to extract data from messy invoices saves the accounting team about 12 hours a week.

- 30% improvement in equipment uptime: Industrial teams use predictive maintenance to spot failures 72 hours before they happen, turning unplanned downtime into scheduled repairs.

Real-World Use Cases

Hyper-Personalized E-commerce Experiences

Online stores are finally moving past basic "people also bought" lists. Modern customer segmentation uses unsupervised learning to group users by micro-behaviors. How fast do they scroll? How long do they hover over a specific color? When you feed these segments into a ChatGPT integration, you can generate a custom product description for every visitor. One luxury brand found that this increased its add-to-cart rate by 9.4%, a clear win over static copy written by a human months earlier.
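To show what "grouping users by behavior" means mechanically, here is plain k-means on two hypothetical features per visitor (scroll speed in px/s, hover time in seconds). The data are invented, and seeding with the first k points is an assumption made for reproducibility. In practice you would standardize the features first, since scroll speed dominates the distance here, and use a library implementation such as scikit-learn's `KMeans`.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, ...]


def kmeans(points: Sequence[Point], k: int,
           iterations: int = 20) -> Tuple[List[Point], List[int]]:
    """Plain k-means. Seeds centroids with the first k points for reproducibility."""
    centroids: List[Point] = [tuple(p) for p in points[:k]]
    labels: List[int] = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: each visitor joins the nearest centroid.
        labels = [min(range(k), key=lambda c: math.dist(p, centroids[c]))
                  for p in points]
        # Update step: move each centroid to the mean of its members.
        updated: List[Point] = []
        for c in range(k):
            members = [p for p, label in zip(points, labels) if label == c]
            if members:
                updated.append(tuple(sum(dim) / len(members) for dim in zip(*members)))
            else:
                updated.append(centroids[c])  # leave an empty cluster where it was
        if updated == centroids:
            break  # converged
        centroids = updated
    return centroids, labels


# Hypothetical features per visitor: (scroll speed in px/s, hover time in s).
visitors = [(1200.0, 0.5), (1100.0, 0.8), (300.0, 6.0),
            (250.0, 7.5), (1150.0, 0.6), (280.0, 6.8)]
centroids, labels = kmeans(visitors, k=2)
print(labels)  # [0, 0, 1, 1, 0, 1]
```

Segment 0 holds the fast skimmers and segment 1 the slow, deliberate browsers; each segment could then get its own copy style.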

Healthcare Diagnostic Support

In the medical field, supervised learning helps radiologists by pre-screening thousands of images. The goal isn't to replace the doctor. It's about speed. According to IBM AI Insights, these tools cut screening time by 35%. This lets specialists spend their energy on the hard cases. The real secret is the data labeling. Specialists give the model "gold standard" feedback, which keeps the false-positive rate low. It's a constant loop of improvement.
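The feedback loop depends on measuring the model against specialist labels. A minimal sketch, assuming each image gets a boolean model flag and a boolean gold-standard label; the `MAX_FPR` tolerance is a hypothetical value, not a clinical standard.

```python
from typing import Sequence


def false_positive_rate(flagged: Sequence[bool], gold: Sequence[bool]) -> float:
    """FPR = FP / (FP + TN): the share of truly normal images the model flagged."""
    if len(flagged) != len(gold):
        raise ValueError("model flags and gold-standard labels must line up")
    fp = sum(f and not g for f, g in zip(flagged, gold))
    tn = sum(not f and not g for f, g in zip(flagged, gold))
    negatives = fp + tn
    return fp / negatives if negatives else 0.0


MAX_FPR = 0.10  # hypothetical tolerance agreed with the radiology team

model_flags = [True, True, False, False, True, False]
specialist_labels = [True, False, False, False, True, False]  # gold standard
fpr = false_positive_rate(model_flags, specialist_labels)
print(f"FPR = {fpr:.2f}")  # FPR = 0.25
if fpr > MAX_FPR:
    print("Send the disagreements back for relabeling and retrain.")
```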

Supply Chain Demand Forecasting

Logistics companies now combine workflow automation and deep learning to forecast inventory needs six months out. They aren't just looking at sales; they also run sentiment analysis on social trends and track global economic markers. This helps them catch a demand spike before the orders even come in. One electronics distributor saved $1.2 million in 2025 by not overstocking parts that were about to become obsolete. The model saw the shift toward sustainable materials coming a mile away.
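The paragraph describes deep learning models fed with sentiment and economic signals. As a much simpler stand-in, here is Holt's linear-trend exponential smoothing, a classic baseline worth beating before investing in deep learning. The smoothing constants and sales figures are illustrative assumptions; external signals like social sentiment would enter a real model as extra features, which this sketch omits.

```python
from typing import List, Sequence


def holt_forecast(history: Sequence[float], horizon: int,
                  alpha: float = 0.5, beta: float = 0.3) -> List[float]:
    """Holt's linear-trend exponential smoothing.

    alpha weights new observations into the level; beta weights new
    level changes into the trend. Returns `horizon` future values.
    """
    if len(history) < 2:
        raise ValueError("need at least two observations")
    level, trend = float(history[0]), float(history[1] - history[0])
    for y in history[1:]:
        previous_level = level
        level = alpha * y + (1 - alpha) * (level + trend)
        trend = beta * (level - previous_level) + (1 - beta) * trend
    return [level + h * trend for h in range(1, horizon + 1)]


# Hypothetical monthly unit sales for one part family.
monthly_units = [100, 110, 120, 130, 140]
print(holt_forecast(monthly_units, horizon=6))  # six months out
```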

What Fails During Implementation

The biggest reason projects die in 2026 is model drift. The world changes, but the model stays the same. If you trained a fraud detection system on 2024 spending, it won't catch a 2026-style attack. It's a "silent failure." The model keeps giving high-confidence answers that are completely wrong. You need an automated retraining pipeline. If your accuracy drops below a 95% threshold, the system should trigger a refresh automatically. Don't wait for a human to notice.
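A minimal sketch of the automated trigger, assuming ground-truth labels (for example, confirmed fraud cases) eventually arrive for each prediction. The `DriftMonitor` class and the `retrain_model` hook are hypothetical; the 95% threshold matches the text. On heavily imbalanced fraud data, accuracy can stay high while the model misses fraud, so you may prefer to track recall on the fraud class instead.

```python
from collections import deque
from typing import Deque


class DriftMonitor:
    """Rolling-accuracy check over the most recent labeled predictions."""

    def __init__(self, threshold: float = 0.95, window: int = 500):
        self.threshold = threshold
        self.window = window
        self.hits: Deque[bool] = deque(maxlen=window)

    def accuracy(self) -> float:
        return sum(self.hits) / len(self.hits) if self.hits else 1.0

    def record(self, prediction: object, actual: object) -> bool:
        """Log one outcome once ground truth arrives; return True if a retrain is due."""
        self.hits.append(prediction == actual)
        if len(self.hits) < self.window:
            return False  # not enough evidence yet
        return self.accuracy() < self.threshold


def retrain_model() -> None:
    # Hypothetical hook: kick off your retraining pipeline here.
    print("Accuracy below threshold: retraining triggered")


monitor = DriftMonitor(threshold=0.95, window=20)
stream = [("ok", "ok")] * 20 + [("ok", "fraud")] * 2  # two missed frauds at the end
for predicted, actual in stream:
    if monitor.record(predicted, actual):
        retrain_model()
        break
```

One miss leaves the window at exactly 95% and does not fire; the second drops it to 90% and triggers the refresh without anyone having to notice.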

Another common failure is over-engineering. Teams spend 8 months building a custom neural network when a simple prompt or a basic linear regression would have done the job in 2 weeks.
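Before committing to a custom network, fit the cheap baseline and record its error. A sketch using ordinary least squares on toy, perfectly linear data (daily orders in hundreds vs. staff hours in tens, both invented); Python 3.10+ also ships `statistics.linear_regression` for the same fit. Measure the error on held-out data in practice; if a complex model can't clearly beat it, the extra months are hard to justify.

```python
from typing import Sequence, Tuple


def fit_line(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least squares fit of y = slope * x + intercept."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two equal-length sequences with at least two points")
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("x has no variance; a line is undefined")
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def mean_absolute_error(actual: Sequence[float], predicted: Sequence[float]) -> float:
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


# Toy data: daily orders (hundreds) vs. staff hours needed (tens).
orders = [1.0, 2.0, 3.0, 4.0, 5.0]
staff_hours = [3.0, 5.0, 7.0, 9.0, 11.0]
slope, intercept = fit_line(orders, staff_hours)
baseline_mae = mean_absolute_error(staff_hours, [slope * o + intercept for o in orders])
print(slope, intercept, baseline_mae)  # 2.0 1.0 0.0
```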

Don't ignore algorithmic bias. If your hiring model is trained on old data from a time when your company wasn't diverse, it will keep rejecting great candidates. In 2026, this isn't just a PR problem; it can lead to massive fines. Use explainability layers like SHAP or LIME so you know *why* the machine made a choice, and make sure the logic rests on actual merit, not on proxy variables for attributes like age or gender.
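SHAP and LIME explain individual predictions. A complementary, outcome-level audit compares selection rates across groups, and the sketch below swaps in that simpler check. The 0.8 cutoff is the "four-fifths rule" from the US Uniform Guidelines on Employee Selection Procedures, used here as a screening rule of thumb rather than a legal test; the group labels and outcomes are hypothetical.

```python
from typing import Dict, Iterable, Tuple


def selection_rates(decisions: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Share of candidates selected within each group."""
    totals: Dict[str, int] = {}
    selected: Dict[str, int] = {}
    for group, was_selected in decisions:
        totals[group] = totals.get(group, 0) + 1
        selected[group] = selected.get(group, 0) + int(was_selected)
    return {group: selected[group] / totals[group] for group in totals}


def adverse_impact_ratio(decisions: Iterable[Tuple[str, bool]]) -> float:
    """Lowest group selection rate divided by the highest."""
    rates = selection_rates(decisions)
    highest = max(rates.values())
    if highest == 0:
        raise ValueError("no one was selected; the ratio is undefined")
    return min(rates.values()) / highest


# Hypothetical screening results from a hiring model, by applicant group.
outcomes = ([("A", True)] * 4 + [("A", False)] * 4
            + [("B", True)] * 2 + [("B", False)] * 6)
ratio = adverse_impact_ratio(outcomes)
print(f"impact ratio = {ratio:.2f}")  # impact ratio = 0.50
if ratio < 0.8:  # four-fifths rule of thumb
    print("Flag for review: inspect feature attributions for proxy variables.")
```

A flagged ratio tells you *that* outcomes diverge; SHAP or LIME attributions then help show *which* features drive it.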

Cost vs ROI: What the Numbers Actually Look Like

What you pay depends on how fast you need answers and how messy your data is. Here are the 2026 project costs I've seen consistently.

| Project Scale | Initial Investment | Annual OpEx | Typical Payback Period |
|---|---|---|---|
| Small (No-code/SaaS integration) | $10,000 - $25,000 | $2,000 | 4 - 6 months |
| Medium (Custom API & RAG) | $75,000 - $200,000 | $15,000 | 10 - 14 months |
| Large (Proprietary Training/MLOps) | $500,000+ | $100,000+ | 18 - 24 months |

Why do these timelines vary? Data hygiene. If you have a clean, central data warehouse, you'll hit ROI three times faster. If you first have to spend six months cleaning up siloed databases, you're going to wait. No-code AI has also made things much easier for smaller shops: you can now build smart workflows without a large data science team. As McKinsey's State of AI report points out, the best ROI is in "middle-office" work, where machine learning handles high-volume, routine decisions.
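The payback column boils down to simple arithmetic. A sketch, assuming a flat monthly benefit (the $11,250 and $5,000 figures below are invented for illustration) and treating any data-cleanup period as months with no benefit and no running costs:

```python
import math


def payback_months(initial_investment: float, monthly_benefit: float,
                   annual_opex: float, cleanup_months: int = 0) -> int:
    """Months until cumulative net benefit covers the initial investment.

    Assumes benefits and running costs both start after any data-cleanup
    period, and that the monthly benefit is flat.
    """
    net_monthly = monthly_benefit - annual_opex / 12
    if net_monthly <= 0:
        raise ValueError("running costs exceed benefits; the project never pays back")
    return cleanup_months + math.ceil(initial_investment / net_monthly)


# Hypothetical medium (custom API & RAG) project.
print(payback_months(120_000, 11_250, 15_000))                    # 12
print(payback_months(120_000, 11_250, 15_000, cleanup_months=6))  # 18
# Hypothetical small (no-code) project.
print(payback_months(25_000, 5_000, 2_000))                       # 6
```

The same project lands inside the table's 10 - 14 month band with clean data and slips to 18 months once six months of cleanup come first.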

When This Approach Is the Wrong Choice

Machine learning isn't a magic wand. If you have fewer than 5,000 clean records, your model won't be statistically sound; it will mostly overfit to noise. And if you need 100% transparency, as in legal or high-stakes safety roles, the "black box" of deep learning is a liability. Sometimes the cost of a single mistake is simply too high. In those cases, stick to traditional rules-based software. It's safer.

Why Certain Approaches Outperform Others

For 90% of business tasks, Retrieval-Augmented Generation (RAG) beats fine-tuning. Fine-tuning is like asking a student to memorize a whole textbook; RAG is like giving them an open-book test with the whole library available. RAG systems usually show a 60% reduction in factual errors. They're also about 80% cheaper to keep running, because you're updating a database rather than retraining the model.
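To make the "open-book" idea concrete, here is a toy retrieval step, assuming bag-of-words cosine similarity as a stand-in for the learned embeddings and vector database a production RAG system would use. The function names are hypothetical, and the generation call to an actual LLM is left out.

```python
import math
import re
from collections import Counter
from typing import List


def vectorize(text: str) -> Counter:
    """Bag-of-words counts; a stand-in for a learned embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norms = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norms if norms else 0.0


def retrieve(query: str, documents: List[str], k: int = 2) -> List[str]:
    """Return the k documents most similar to the query."""
    q = vectorize(query)
    return sorted(documents, key=lambda d: cosine(q, vectorize(d)), reverse=True)[:k]


def build_prompt(query: str, documents: List[str], k: int = 2) -> str:
    """Ground the model: put retrieved company text in front of the question."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    return ("Answer using only the context below. If it is not there, say so.\n"
            f"Context:\n{context}\n\nQuestion: {query}")


# Updating the model's knowledge means editing this list, not retraining.
knowledge_base = [
    "Our return window is 30 days from delivery.",
    "Standard shipping takes 3 to 5 business days.",
    "Gift cards cannot be refunded.",
]
print(retrieve("How many days is the return window?", knowledge_base, k=1))
# ['Our return window is 30 days from delivery.']
```

The cost argument shows up directly: a policy change is one edit to `knowledge_base`, while a fine-tuned model would need another training run.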

You should also look at Small Language Models (SLMs). They're beating the big models for narrow tasks like coding or sentiment analysis. They're faster and can run on your own hardware, which is great for privacy. Using an SLM for a specific job usually leads to a 5x reduction in compute costs. You don't need GPT-5 to categorize an email. It's overkill.

Frequently Asked Questions

What is the minimum data requirement for a custom machine learning model?

It depends on the task. For most supervised learning problems, though, you'll want 5,000 to 10,000 labeled examples to get past 85% accuracy. If you don't have that, try synthetic data generation or use RAG with a pre-trained model. That's often the better path anyway.

How much does it cost to maintain a machine learning application?

Plan on 15% to 25% of your build cost every year. You've got to pay for API credits and cloud time. Plus, someone needs to keep an eye on model drift to make sure the performance doesn't tank over time.

Can small businesses use machine learning without a data science team?

Absolutely. You can use no-code AI tools like Akkio or Zapier. They're great for building predictive analytics and smart workflows by just plugging in your existing data. You don't need a PhD for that.

What is the biggest risk of using machine learning in business?

The top risk is algorithmic bias. It can lead to unfair outcomes and legal trouble. Make sure you use explainability tools and audit your models regularly. You need to be sure the machine is following the rules.

How do I know if my business problem needs machine learning?

Is the task repetitive? Does it involve a mountain of data? Do you need a probability rather than a yes/no answer (like "How likely is this person to quit?")? If so, your problem is a prime candidate for business applications of machine learning.

Which is better: building a custom model or using an API?

For 95% of use cases, use an API. Combining something from OpenAI Research with your own data via RAG is usually the way to go. Only build from scratch if you're in a super niche field where no models exist yet.

Conclusion

Winning with business applications of machine learning in 2026 isn't about complexity. It's about how well the system fits into your day-to-day work. Start small: use high-quality data and pick one metric you want to improve. Before you go all-in on a custom build, run a 14-day pilot with a no-code AI tool. It will show you exactly where your data gaps are before you waste a fortune.